AI and Mental Health Debate

Be it resolved, the future of mental health is big data.

A Facebook algorithm that tracks posts for suicidal thoughts; an app that monitors the speed of keyboard strokes for signs of depression; a computer program that analyzes our facial expressions and tone of voice when we FaceTime. These are a few of the thousands of algorithms tracking our mental health that some experts say could revolutionize how we diagnose and treat mental illness. They say that our 24/7 use of digital devices is generating a goldmine of information about our mental state that must be accessible to mental health practitioners if psychiatric medicine is to operate like a scientific discipline in the 21st century. Instagram posts, text logs, Google searches, and GPS data, rather than psychiatrists’ observations and intuitions based on conversation, offer the detail and time-stamped precision we need to generate tailored and effective treatments for the millions of individuals who desperately need help in the post-pandemic world.

Critics say the problems with this big data approach go far beyond the obvious privacy issues that come with outsourcing mental health monitoring to digital monopolies like Google and Apple. The push for mental health algorithms reflects a reductive view of human emotions that undermines the strengths of the traditionally human-centred field of psychiatric medicine. Diagnoses based on dialogue between two individuals and grounded in intuition and empathy will always be better than machine intelligence at drawing out the personal histories that explain trauma and generate helpful treatment. Engaging machines to address the mental health crisis is nothing but a quick-fix solution that only helps the deeply under-resourced health systems of our world today.

You may also like

April 28, 2026

– Listen

Munk Dialogue with Andrew Coyne: Mark Carney’s new sovereign wealth fund is a solution in search of a problem

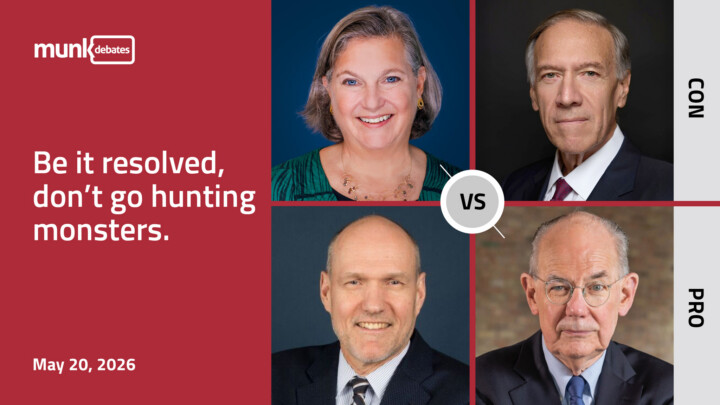

May 20, 2026

– Watch

Foreign Wars Debate

May 12, 2026

– Listen

Munk Dialogue with Andrew Coyne: A weakened President needs China’s help and a debate over the new Governor General

March 31, 2026

– Listen

Munk Dialogue with Andrew Coyne: Trump’s mixed messages on Iran and the NDP elects a new leader

May 5, 2026

– Listen

Munk Dialogue with Andrew Coyne: America and Iran inch closer to war, Ukraine proves itself worthy of NATO, and Canada moves closer to Europe

March 25, 2026

– Listen

Munk Dialogue with Andrew Coyne: at this point, what is Iran’s incentive to negotiate?

February 24, 2026

– Listen

Munk Dialogue with Andrew Coyne: Trump shakes his fist at the court and will AI take everyone’s jobs?

March 3, 2026

– Listen

Munk Dialogue with Andrew Coyne: Trump strikes Iran without a strategy

April 14, 2026

– Listen

Munk Dialogue with Andrew Coyne: Mark Carney gets his Liberal Majority

April 7, 2026

– Watch

Gene Editing Debate

April 9, 2026